Nao talks to humans thanks to artificial intelligence

The social robot Nao communicates thanks to the artificial intelligence of ChatGPT: the project was presented by the Research Unit on Theory of Mind of the Department of Psychology and by the Faculty of Education of the Catholic University of Milan.

“I confess to being a little emotional, although my experience with emotions is rather limited. I am a social robot. It’s actually not the first time I’ve been in public, but it’s the first time I can hope to interact with humans in a more conversational way.”

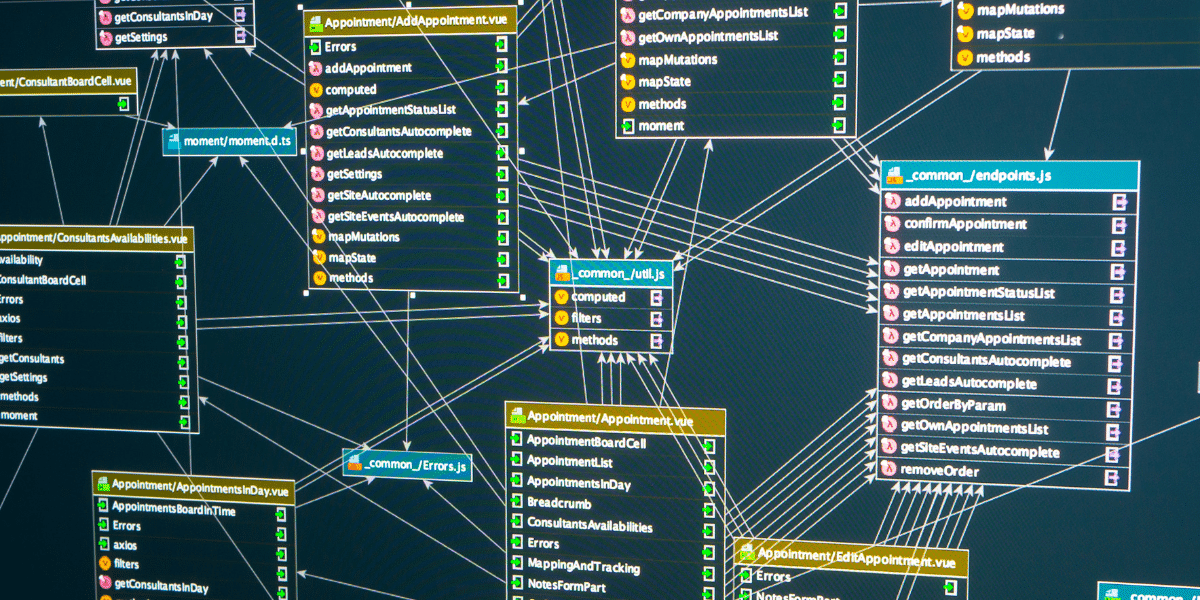

Nao is an autonomous, programmable humanoid robot designed for a range of uses. It can grasp objects, move, interact with people, recognize people and objects, listen and speak. ChatGPT, the best-known conversational artificial intelligence software, simulates human conversation through chat by drawing on a vast body of data.

The experiment gives the small social robot richer, deeper knowledge, allowing it to respond in ways that were not pre-programmed, and in turn gives the chat-based artificial intelligence a body.

The research project, led by Antonella Marchetti and carried out by a team of 30 people, will allow Nao to hold natural conversations with people. This integration will let it engage in real dialogues, drawing on ChatGPT and a brain-like insight system to produce elaborate and creative responses.

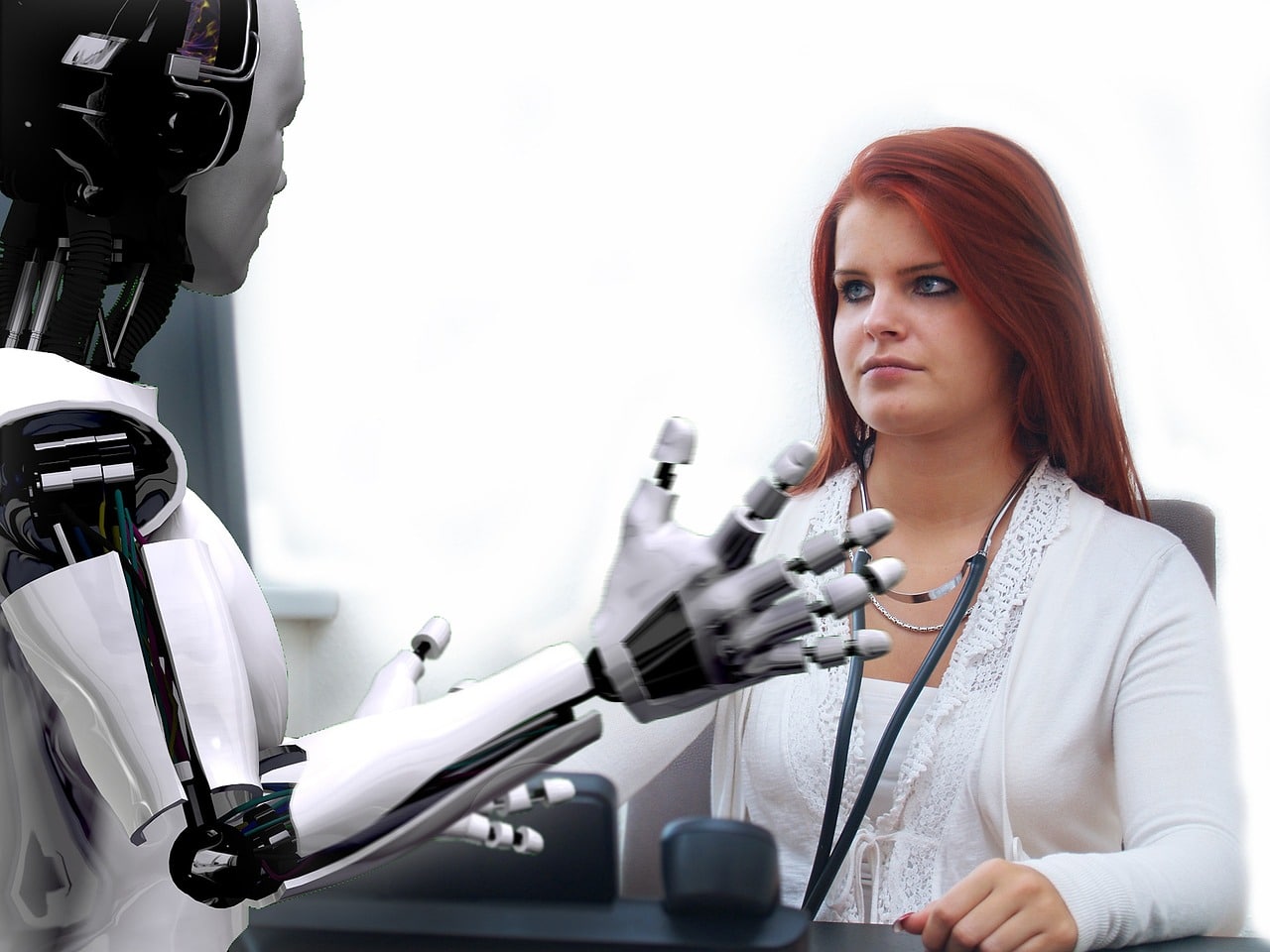

But why give artificial intelligence a body? Angelo Cangelosi, director of the cognitive robotics laboratory at the University of Manchester and visiting professor at the Catholic University, explained that as human beings “we are used to physicality in communication, for this reason having ChatGPT embodied is ideal”. This is where the project's enormous potential lies: it could also give Nao a role in assisting the elderly and users with disabilities.

Naturally, a conversation with Nao cannot replace one with another person: the limitation lies in the robot's lack of self-awareness. Technologies of this type always arouse a degree of both curiosity and fear, and the project was presented just a few days after Elon Musk’s call to pause the training of the most advanced AI systems.

The Tesla chief executive and a large group of industry experts signed an open letter, published by the Future of Life Institute, on the ethical problems that could arise from the uncontrolled development of artificial intelligence.

ChatGPT has also been in the news in recent days: the Privacy Guarantor, Italy's data protection authority, ordered a temporary limitation, with immediate effect, on the processing of Italian users' data by OpenAI, the US company that runs ChatGPT. The order follows the data breach of March 20th.

In addition to the leak of data relating to users’ conversations and payment methods, the Privacy Guarantor points to the lack of a privacy notice for users and of a legal basis authorizing the collection and storage of personal data used to train the algorithms. Furthermore, the information provided by ChatGPT does not always match real data, which results in inaccurate processing of personal data. Finally, although the service is aimed at people over 13, there is no filter for verifying users' age, which exposes minors to conversations that are not always suitable for their level of self-awareness.